Most businesses are already experimenting with AI. Fewer are integrating it into operations in a way that improves output without slowing teams down. In this article, we discuss how you can introduce AI to your company.

Key Takeaways

- AI adoption becomes disruptive when it is layered on top of existing processes without alignment, and effective when it is part of how work already gets done.

- Adoption measures access, but proficiency measures capability, and most teams are not proficient enough to generate reliable, production-ready output.

- Businesses should start with one process that is measurable and repeatable, then introduce AI as an assistive layer instead of a full replacement.

- Scaling AI requires measurable results, including time saved, error reduction, and output improvement, not just increased usage.

The difference between experimenting with AI and integrating it is not technology. It is execution discipline.

AI adoption becomes disruptive when it is layered on top of existing processes without alignment. It becomes effective when it is introduced as part of how work already gets done, with clear ownership, defined use cases, and measurable outcomes.

This is a practical guide to doing that.

AI Proficiency: Establish Capability Before Deployment

Before introducing AI into workflows, businesses need to validate whether teams can actually use it correctly. This is where most implementations fail early, not because the tools are complex, but because usage is inconsistent.

This gap between access and effective use is often misunderstood. As Larridin notes, “Adoption measures access. Proficiency measures capability.” The distinction matters operationally. Many organizations deploy AI tools widely, but only a small portion of teams are proficient enough with AI to generate reliable, production-ready output.

Assessing AI Readiness in Real Tasks

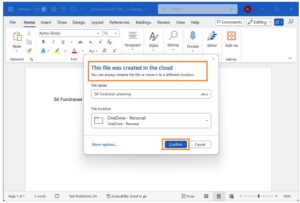

Instead of running theoretical training, test AI usage directly inside existing workflows.

Take a specific function, for example, customer support or reporting, and assign a standard task to multiple team members using AI tools. Compare the outputs against baseline performance.

Look at accuracy, time saved, and the level of correction required. In many cases, teams produce outputs faster but introduce new errors, and the time spent correcting those errors can cancel out the efficiency gain.

This type of internal testing provides a clear signal of where AI can be introduced safely and where additional structure is required.
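
As a rough illustration of that comparison, the sketch below adds correction time to drafting time before declaring any saving. The `TaskResult` fields and all of the numbers are hypothetical, chosen only to show how a faster draft can produce a small net gain once review is counted.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """One person's result on the standard test task (hypothetical fields)."""
    minutes_to_draft: float    # time to produce the first output
    minutes_to_correct: float  # reviewer time spent fixing that output
    errors_found: int          # errors caught during review


def total_minutes(result: TaskResult) -> float:
    # Correction time counts toward the real cost of the task.
    return result.minutes_to_draft + result.minutes_to_correct


def net_minutes_saved(baseline: TaskResult, with_ai: TaskResult) -> float:
    # Positive means the AI-assisted run was faster end to end.
    return total_minutes(baseline) - total_minutes(with_ai)


# Illustrative numbers only, not measurements.
baseline = TaskResult(minutes_to_draft=60.0, minutes_to_correct=10.0, errors_found=1)
with_ai = TaskResult(minutes_to_draft=20.0, minutes_to_correct=45.0, errors_found=4)

print(net_minutes_saved(baseline, with_ai))          # 5.0
print(with_ai.errors_found - baseline.errors_found)  # 3 additional errors
```

In this made-up case the draft is 40 minutes faster, but the net saving is only 5 minutes and the error count is higher, which is the pattern the test is meant to surface.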

Define How AI Should Be Used

Unstructured usage creates inconsistency. One employee uses AI for drafting, another for summarizing, another avoids it entirely. This leads to uneven output across teams.

Businesses that avoid disruption define usage at the process level. For example, in reporting workflows, AI may be used to generate first drafts, but final validation remains manual. In customer support, AI may suggest responses, but agents review them before sending.

This is not about restricting use. It is about standardizing it.

Build Role-Specific Capability

AI proficiency is not uniform. Finance teams require higher accuracy and auditability than marketing teams. Operations teams need outputs that integrate into systems, not standalone responses.

This means training and usage guidelines must be tied to roles. A generic rollout leads to misuse. A structured rollout aligned with job function produces consistent results.

Start With One Process, Not the Whole Business

Introducing AI across an entire organization creates unnecessary risk. The correct approach is to start with one process that is measurable and repeatable.

Identify a Process With Clear Boundaries

The best starting point is a workflow that already exists, produces consistent output, and has defined inputs.

Examples include monthly reporting, invoice processing, or internal knowledge retrieval. These processes are structured enough to support automation but still consume significant time.

Avoid starting with loosely defined tasks such as “improving decision-making” or “enhancing strategy.” These are not operational entry points.

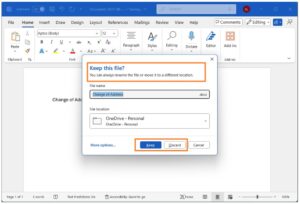

Map the Current Workflow

Before introducing AI, document how the process currently works. Identify where time is spent, where delays occur, and where errors are introduced. This creates a baseline.

Without this step, it is not possible to measure whether AI improves performance or simply shifts work from one part of the process to another.

Introduce AI as a Layer, Not a Replacement

AI should first be introduced as an assistive layer.

For example, in reporting, AI can generate initial summaries based on data inputs. The analyst then reviews and finalizes the output. Over time, as accuracy improves, the level of manual intervention can be reduced.

This approach avoids disruption because it does not remove existing controls. It enhances them.

Introduce AI Into Existing Systems

AI tools that sit outside core systems rarely produce lasting impact. Teams use them inconsistently, and outputs are not connected to operational workflows.

Integration is what determines whether AI becomes part of the business or remains an experiment.

Work Inside Current Platforms

AI should be embedded into systems already used by the business, such as CRM, ERP, or internal dashboards.

For example, instead of generating insights in a separate tool, integrate AI into the reporting system so outputs are available where decisions are made.

This reduces friction and increases adoption.

Align With Data Sources

AI performance depends on data quality. If data is fragmented across systems, outputs will be inconsistent.

Before scaling AI, ensure that the data used in the target process is reliable and accessible. This often requires basic integration work: consolidating data sources, standardizing formats, and ensuring updates are synchronized.

Skipping this step leads to unreliable outputs and loss of trust in the system.

Redesign the Workflow Where Needed

AI changes how work is performed. Ignoring this creates inefficiencies.

For example, if AI generates draft outputs, approval processes may need to be adjusted. If tasks are partially automated, roles may shift from execution to validation.

These changes should be defined explicitly. Otherwise, teams continue working as before, and AI becomes an additional step rather than an improvement.

Manage Risk Without Slowing Execution

AI introduces operational risks, primarily around accuracy, compliance, and data handling. These need to be managed, but not in a way that blocks adoption.

Define Validation Points

Not all outputs require the same level of oversight.

High-risk outputs, such as financial reports or compliance documents, should include mandatory human validation. Lower-risk outputs, such as internal summaries, can be automated with periodic review.

This creates a tiered approach where control is applied where it matters most.
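
One way to make the tiers explicit is a small lookup that every AI-enabled workflow consults before an output is released. This is a minimal sketch: the output type names are hypothetical labels for the examples above, and defaulting unknown types to the strictest tier is an assumption, not something the approach requires.

```python
from enum import Enum


class Review(Enum):
    MANDATORY_HUMAN = "mandatory human validation"
    PERIODIC = "automated with periodic review"


# Hypothetical output types mapped to the two tiers described above.
REVIEW_POLICY = {
    "financial_report": Review.MANDATORY_HUMAN,
    "compliance_document": Review.MANDATORY_HUMAN,
    "internal_summary": Review.PERIODIC,
}


def required_review(output_type: str) -> Review:
    # Assumption: anything not yet classified gets the strictest tier.
    return REVIEW_POLICY.get(output_type, Review.MANDATORY_HUMAN)


print(required_review("internal_summary").value)
print(required_review("vendor_contract").value)  # unclassified type
```

Keeping the policy in one table means adding a new output type is a one-line change rather than a new approval process.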

Monitor Output Quality

AI systems require ongoing monitoring. This is not a one-time implementation.

Track error rates, rework levels, and user feedback. If performance degrades, identify whether the issue is data quality, prompt structure, or workflow design.

This turns AI into a managed system rather than a static tool.
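
A simple monitoring check can be sketched as follows, assuming error counts are logged per week. The function names, the weekly cadence, and the 0.02 tolerance are all illustrative assumptions; the point is only that degradation is detected by comparing the latest period with earlier ones.

```python
def error_rate(errors: int, outputs: int) -> float:
    # Share of outputs that needed correction; 0.0 when nothing was produced.
    return errors / outputs if outputs else 0.0


def has_degraded(weekly_rates: list[float], tolerance: float = 0.02) -> bool:
    """Return True if the latest rate exceeds the average of earlier weeks
    by more than `tolerance` (an assumed threshold)."""
    if len(weekly_rates) < 2:
        return False  # not enough history to compare
    *earlier, latest = weekly_rates
    return latest - sum(earlier) / len(earlier) > tolerance


# Illustrative counts: (errors, outputs) for three consecutive weeks.
weekly = [error_rate(4, 100), error_rate(5, 100), error_rate(9, 100)]
print(has_degraded(weekly))  # True
```

A flag like this does not say whether the cause is data quality, prompt structure, or workflow design. It only signals when that investigation should start.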

Avoid Overengineering Governance

Excessive approval layers and complex policies slow adoption and reduce usage.

Effective governance focuses on clarity. Define what is allowed, what requires approval, and who is responsible. Keep it simple enough that teams can operate without friction.

Scale Based on Measurable Results

Once a process is working reliably, scaling becomes a controlled exercise.

Expand to Adjacent Processes

Do not jump to unrelated areas. Extend AI usage to processes that are similar in structure.

For example, if AI improves reporting, apply the same approach to forecasting or data analysis. This leverages existing capability and reduces implementation risk.

Measure Business Impact, Not Activity

Adoption metrics alone are not useful. What matters is impact.

Track changes in time to complete tasks, reduction in errors, and overall output volume. Compare these against the baseline established before implementation. If these metrics do not improve, scaling should pause until issues are resolved.
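
The pause rule can be written down as an explicit gate. The sketch below is one possible interpretation, assuming that "improve" means tasks are faster while errors and output volume are no worse than the baseline. The `ProcessMetrics` fields and the example figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProcessMetrics:
    avg_minutes_per_task: float
    error_rate: float       # fraction of outputs needing correction
    outputs_per_week: int


def ready_to_scale(baseline: ProcessMetrics, current: ProcessMetrics) -> bool:
    # Assumed rule: faster tasks, with errors and volume no worse than baseline.
    return (
        current.avg_minutes_per_task < baseline.avg_minutes_per_task
        and current.error_rate <= baseline.error_rate
        and current.outputs_per_week >= baseline.outputs_per_week
    )


# Illustrative figures only.
baseline = ProcessMetrics(avg_minutes_per_task=70.0, error_rate=0.04, outputs_per_week=30)
current = ProcessMetrics(avg_minutes_per_task=45.0, error_rate=0.06, outputs_per_week=38)

print(ready_to_scale(baseline, current))  # False: errors rose, so scaling pauses
```

Usage counts do not appear anywhere in the gate, which keeps the decision tied to impact rather than activity.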

Assign Clear Ownership

Every AI-enabled process must have an owner.

This includes responsibility for performance, integration, and ongoing improvement. Without ownership, systems degrade over time and revert to inconsistent usage.

The Practical Outcome of Introducing AI to Your Company

Introducing AI without disruption is not about minimizing change. It is about controlling it.

Start with capability, not tools. Validate proficiency before deployment. Introduce AI into one defined process, measure results, and integrate it into existing systems.

From there, expand incrementally, maintaining control over performance and risk. Businesses that follow this approach do not treat AI as a separate initiative. They treat it as part of how work is executed.

That is what allows AI to improve operations without disrupting them.

Frequently Asked Questions

- Why does AI adoption become disruptive in a business?

AI adoption becomes disruptive when it is layered on top of existing processes without alignment. It becomes effective when it is introduced as part of how work already gets done, with clear ownership, defined use cases, and measurable outcomes.

- Why should a business start with one process instead of the whole business?

Introducing AI across an entire organization creates unnecessary risk. The correct approach is to start with one process that is measurable and repeatable.

- What should businesses measure when scaling AI?

Businesses should measure impact, including changes in time to complete tasks, reduction in errors, and overall output volume, rather than relying on adoption metrics alone.