Editors’ note: Welcome to CNET’s new series of guest columns called Alt View, a forum for a diverse array of experts and luminaries to share their insights into the rapidly evolving field of artificial intelligence. For more AI coverage, check out CNET’s AI Atlas.

“How are you using AI?” I asked a class full of executives. Some of the answers I had heard before: health professionals using it to read medical images; managers using it to draft emails; a retail company using it to take notes in meetings before giving up on it when they realized that the AI confabulated and had no understanding of context. And then, a gem. There’s almost always a gem.

“I use chatbots as fortune tellers,” said a middle-aged Asian woman in a beige cardigan and white sneakers. I would later learn that she had built a billion-dollar empire. A nervous rustle spread through the room as people shifted uncomfortably in their seats. “Just like we used to read tea leaves, you can ask AI about the future, and it can be surprisingly accurate. For example, it recently correctly predicted a 2% rise in the stock market,” the student said, nodding and looking around the room while her classmates avoided eye contact.

Today’s ruling soothsayers are no longer astrologers, astronomers, sociologists or even economists; they are computer scientists, data analysts and engineers. Algorithms are the new tea leaves, animal entrails and stars through which we hope to catch a glimpse of the future.

We tend to associate predictions with knowledge, but all too often, they are closer to the realm of power. Prophecies are the boxing ring in which fights over the future take place. Our expectations bend the social world toward our predictions. When someone forecasts that the world will be a certain way, they are commanding that others obey their wishes and bring that world about. Even though we have been using predictions for thousands of years to make some of the most important decisions of our lives, we have dedicated remarkably little thought to the deeper questions about prophecy. Thousands of books have been written about how to predict, but none about the ethics of prediction.

Prediction has become a major industry. Take, for instance, platforms like Polymarket, which aggregate public expectations about future events, collecting massive amounts of data and creating influence. If 58% of users believe that the Oklahoma City Thunder are going to win the NBA Championship title, why would you bet against the majority? But the betting on these platforms extends far beyond sports or even reality TV. It has turned political instability, natural disasters and human suffering into a spectacle, dehumanizing the real-life victims, gamifying life.

Today, predictions have evolved into weapons of power that justify value-laden decisions under the pretense of facts, but predictions are never facts. Facts belong to the present and the past. An assertion about the future can be many things — an estimate, a desire, a warning — but never a fact.

What makes the future the future is that it hasn’t yet happened. What hasn’t come to pass doesn’t exist, and there are no facts about what doesn’t exist. Yet we’re using prediction more than ever with AI, prediction markets and experts talking about the future.

The fantasy of defeating uncertainty

Pierre-Simon Laplace had a dream, often referred to as Laplace’s demon. It occurred to him that, with enough data and compute, it would be possible to achieve complete knowledge. If you knew the exact location and momentum of every particle in the universe, as well as all the laws of nature, then you would be able to predict the future with perfect accuracy. Uncertainty would be defeated at last. As Laplace put it:

Given for one instant an intelligence which could comprehend all the forces by which nature is animated and the respective situation of the beings who compose it — an intelligence sufficiently vast to submit these data to analysis — it would embrace in the same formula the movements of the greatest bodies of the universe and those of the lightest atom; for it, nothing would be uncertain and the future, as the past, would be present to its eyes.

Supporters of AI may not put it in these words, but what they seem to suggest when they enthuse about the power of machine learning plus vast amounts of data is that these technologies are bringing us tantalizingly close to realizing Laplace’s demon. If we can collect every single data point, the thought goes, and we can build enough compute to analyze that data, we can forecast what was previously unforeseeable. Such predictive power promises to revolutionize all fields of knowledge, from medicine to climate change and politics.

Driven by this fantasy, the quantifiers are tracking your every move; recording, tabulating and exhaustively analyzing your pleasures and vices; torturing your data until it screams out in confession. You are being tracked while you drive, search online, do sports, have sex, drink alcohol, do drugs, travel, sleep, talk with your friends and family, spend time on social media, go to the doctor’s office, play online games, read, watch television and breathe.

We manage and discuss our fears in quantified terms: the probability of getting cancer or getting robbed, of an earthquake or another pandemic, of climate change making our world unlivable, of another world war.

The unbridled optimism about defeating uncertainty through AI is understandable. Computers, data and statistics have brought incredible breakthroughs. The Bombe machine broke the Nazis’ Enigma cipher. In medicine, regression analysis was instrumental in identifying risk factors for diseases. Mainframe computers delivered new insights about business; centralized data processing brought real-time transaction processing and scalability. Manufacturing firms gained the ability to monitor production efficiency across entire supply chains, identifying bottlenecks and improving resource allocation.

Personal computers emerged in the 1980s. The 1990s and 2000s saw the rise of the internet and cloud computing, further increasing data availability and processing power. The 2010s marked a turning point with the practical application of deep learning, fueled by big data and improved hardware like GPUs. Advances in algorithms paved the way for machine learning — prediction machines.

AI and prediction: a power play

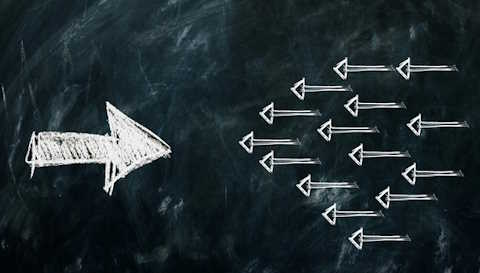

With prediction come all the patterns of prophecy and power that paper our history books. The difference is that AI is prediction on steroids, and we are using it not only on the battlefield and in the doctor’s office but everywhere, from the office to the classroom, the courtroom, our roads, our love lives and beyond.

Machine learning algorithms are predictive machines. That is all they do, whether they are engaging in regression, classification or language. When a machine learning system translates text, it is predicting the most likely translation based on millions of examples of previous translations. When it recognizes wolves in photos, it does so by predicting the probability that a given image contains a wolf, based on patterns it learned from thousands of images labeled wolf and not-wolf. When a large language model answers a question, it is predicting what a human being would say in its place, based on the statistical analysis of books, online forums, social media and so forth.
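To make "predicting the most likely next thing" concrete, here is a deliberately tiny sketch of the idea behind language prediction. It is not how any real large language model is built; it simply counts which word follows which in a three-sentence corpus and then guesses the most frequent follower. The corpus and the function names `train_bigram_model` and `predict_next` are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count, for each word, which words follow it in the training text."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes right after it.
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(follow_counts, word):
    """Return (most frequent follower, its estimated probability).

    Returns (None, 0.0) for a word the model never saw in training.
    """
    counts = follow_counts.get(word.lower())
    if not counts:
        return None, 0.0
    best_word, best_count = counts.most_common(1)[0]
    return best_word, best_count / sum(counts.values())


# Hypothetical toy corpus, standing in for "books, online forums, social media".
corpus = [
    "the wolf howls at the moon",
    "the wolf hunts at night",
    "the dog sleeps at night",
]

model = train_bigram_model(corpus)
print(predict_next(model, "the"))      # "wolf" follows "the" in 2 of 4 cases
print(predict_next(model, "at"))       # "night" follows "at" in 2 of 3 cases
print(predict_next(model, "unicorn"))  # never seen, so no prediction
```

The output is only a statement about the past: the probability is a ratio of counts in the training text. Real systems use vastly more data and far richer models, but in this sketch, at least, the "prediction" is nothing more than the most common pattern in what was already written.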

It’s no wonder that an “oracle” is a technical term in the context of machine learning. An oracle represents the best possible performance that could be achieved; it’s an idealized function that always provides perfect predictions.
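One common way the term is used can be sketched in a few lines: the oracle is a stand-in that already knows the right answer for every example, so its score marks the ceiling against which a real model is measured. The examples, the crude `rule_model` and the helper `accuracy` below are all hypothetical, chosen only to illustrate the comparison.

```python
def accuracy(predict, examples):
    """Fraction of examples for which predict(description) matches the label."""
    correct = sum(1 for description, label in examples if predict(description) == label)
    return correct / len(examples)


# Hypothetical labeled examples: (description, true label).
examples = [
    ("grey fur, howls", "wolf"),
    ("grey fur, barks", "not-wolf"),
    ("brown fur, howls", "wolf"),
    ("brown fur, barks", "not-wolf"),
]

# The oracle is idealized: it simply looks up the true label.
true_labels = dict(examples)


def oracle(description):
    return true_labels[description]


def rule_model(description):
    """A crude stand-in for a learned model: grey fur means wolf."""
    return "wolf" if "grey" in description else "not-wolf"


print("oracle accuracy:", accuracy(oracle, examples))          # ceiling: 1.0
print("rule model accuracy:", accuracy(rule_model, examples))  # 0.5 on this data
```

The oracle can exist here only because the answers are already known. For anything that has not yet happened, there is no table of true labels to look up.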

The triumph of machine learning is a corporate victory much more than a scientific one. Idealists might find it anticlimactic, even depressing. Someone wanting to put it crassly might say that we simply threw money at the problem.

What is most remarkable about the success of machine learning is how unremarkably it came about. “What’s disappointing,” said Michael Wooldridge, professor of AI at Oxford, to a group of my MBA students, “is that it didn’t happen as a result of a scientific breakthrough.” He looked around the room to make sure the weight of his words had landed.

From the 1960s to the early 2000s, the results from neural networks were not very impressive. The symbolic AI gang was winning the race and the grants — until it wasn’t. Something changed: We got more data and more compute, and machine learning took off. In the span of a few years, automatic translation, for instance, went from being unusable to being comprehensible, then good enough to help clueless tourists find their way with no knowledge of the local language. It’s now good enough that I admit I have sometimes preferred an automatic translation to the suggestions of a professional translator who had a weakness for verbosity.

The amazing things that machine learning can do didn’t happen because of greater understanding. It didn’t need any genius. The picture is bleaker than an uninspiring lack of creativity. The means through which such brute force in data and compute was acquired involved theft, the exploitation of vulnerable people, a ferocious use of natural resources and building an architecture of mass surveillance, to name but a few sins.

We might be centuries away from the oracles and astrologers who predated algorithms, but prediction is still mostly about power. Power is how you get predictive algorithms, and more power is what they grant you in return.

From Prophecy: Prediction, Power, and the Fight for the Future, from Ancient Oracles to AI by Carissa Véliz. Reprinted by permission of Doubleday, an imprint of the Knopf Doubleday Publishing Group, a division of Penguin Random House LLC. Copyright © 2026 by Carissa Véliz.